Okay, folks. Time to ‘fess up.

If you are anything like me, you spent the first leg of your WordPress development years “cowboy coding”—that is, making changes wildly on live sites, urgently testing and firing them up with FTP, often resulting in 500 Internal Server Error messages and sitewide breaks all visible to your esteemed visitors.

While this was absolutely thrilling as adrenaline pumped through your fingers, pounding in that forgotten semicolon, on sites with more than 0 visitors (who actually noticed the downtime) this would start to become a problem. If a tree falls and no one is there to hear it, does it make a sound? Depends on the theory of humanity to which you subscribe.

However, if a site crashes and someone is there to see it, they will certainly make a sound.

WordPress Continuous Deployment Done Wrong

Enter staging sites, duplicate WordPress installations (at least in theory) where changes could be made, then made again on the live site once all was confirmed working. While this quieted down visitors, it started to cause us developers to make some noise. Suddenly, we needed to keep track of two sites, ensure that the code is manually synced between them, and test everything again to make sure it is working on the live site. Long, messy lists of “make sure to change this on live” and “make sure to toggle this on the staging site before copying the code over” were nerve-wracking, to say the least. Backups of this system were a nightmare as well—a slew of folders named “my-theme-staging-1” and “my-theme-live-before-menu-restyle-3” and so on.

There had to be a better way, and there was.

Then there was Git, which gives developers reliable version control, among other features. Putting WordPress installations under version control immediately sped up and cleaned up development, since hours now went into coding rather than into each developer's homegrown backup system. Changes were saved and I could finally add meaningful messages to my work, worlds apart from “my-theme-4-v2.”

While the codebase was a lot cleaner, the issue of actual deployments remained: ensuring the site in question was using the latest code was still a prime opportunity for human error. Relying on manual FTP uploads that overwrote the previous code didn’t feel great. While other CI/CD services existed, many of them came with a substantial price tag and a large amount of setup: reconfiguring servers, relying on yet another service for even the simplest website, and learning that service’s whole “way of doing things” along with all its idiosyncrasies.

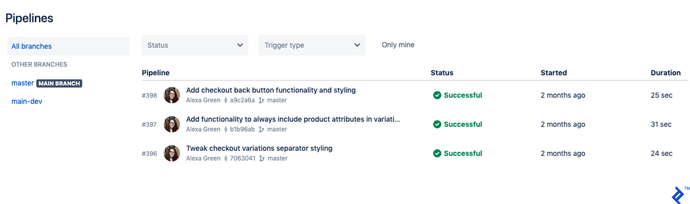

While setups similar to this tutorial can be built with GitHub, GitLab, and other services, I had put my eggs in the Atlassian basket early on because of their free private repositories (which GitHub has only recently started offering). When Bitbucket introduced its Pipelines and Deployments services, new code could be deployed automatically to staging or production sites (or any other site in between) without reuploading via FTP or using an external service. Devs could now use all of the benefits of source control in their WordPress development and instantly send those changes to the appropriate destinations with no additional clicks or keystrokes, with the status of everything visible in one dashboard. This keeps everything in sync and, at a glance, lets you know exactly what code each site is running. Plus, the pricing for Bitbucket’s build minutes is incredibly affordable, with 50 minutes free per month and an option for a “Free with Overages” plan.

It took a bit of startup time to work out how to best use branches and other features of source control in this new model and the particulars of the Bitbucket Pipelines setup. Here’s the process I use for starting new WordPress projects. I won’t go into all of the nitty-gritty on setting up git and WordPress installation since great resources for that are just a Google search away. In the end, the content flow will be something like this:

The Alexa Green WordPress Deployment Routine

The steps outlined here should be performed as needed:

On the Client’s Server

Set up a domain for the live site (e.g., clientsite.com) and subdomain for staging (e.g., staging.clientsite.com).

Install WordPress on both the live site and staging subdomain. This can be done via cPanel/Softaculous (if the client’s hosting has this) or by downloading from wordpress.org. Ensure that there are separate databases for live and staging respectively.

On Bitbucket.org

Set up a new repository. Include a README to get us up and going.

In the repository, go to Settings > Pipelines > Settings, then check Enable Pipelines.

In Settings > Pipelines > Repository variables, enter the following:

Name: FTP_username

Value: The client FTP username

Name: FTP_password

Value: The client FTP password
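Bitbucket exposes repository variables to the build as environment variables, which is how $FTP_username and $FTP_password end up usable in the pipeline script. As a minimal sketch (the function name require_deploy_vars is hypothetical, not part of Bitbucket or git-ftp), a guard like this could run before the upload so that a missing variable fails the build early with a readable message:

```shell
#!/bin/sh
# Hypothetical helper: fail fast if a deployment variable is missing.
# FTP_username and FTP_password are the repository variables defined above.
require_deploy_vars() {
  for name in FTP_username FTP_password; do
    # Indirect lookup of the variable whose name is stored in $name.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "Missing repository variable: $name" >&2
      return 1
    fi
  done
  echo "All deployment variables are set"
}
```

If used, the call would go ahead of the git ftp line so the step stops before attempting an upload with empty credentials.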

Go back to Pipelines > Settings and click the Configure bitbucket-pipelines.yml button. Select PHP as the language on the following page. You’ll want to use something like the following code. Make sure to replace the PHP version with whatever you are using on the client’s server, and URLs/FTP servers with the actual client site (production and staging) URLs/FTP servers.

    image: php:7.1.29

    pipelines:
      branches:
        master:
          - step:
              name: Deploy to production
              deployment: production
              script:
                - apt-get update
                - apt-get -qq install git-ftp
                - git ftp init --user $FTP_username --passwd $FTP_password ftp://ftp.clientsite.com
        main-dev:
          - step:
              name: Deploy to staging
              deployment: staging
              script:
                - apt-get update
                - apt-get -qq install git-ftp
                - git ftp init --user $FTP_username --passwd $FTP_password ftp://ftp.clientsite.com/staging.clientsite.com

Click Commit file. The Pipelines setup will now get committed and run.

If everything deploys successfully, go back and edit the bitbucket-pipelines.yml file (you can get there through Pipelines > Settings and View bitbucket-pipelines.yml). Replace git ftp init with git ftp push in both places, then save and commit. From then on, only changed files are uploaded, which saves you build minutes. Your bitbucket-pipelines.yml file should now read:

    image: php:7.1.29

    pipelines:
      branches:
        master:
          - step:
              name: Deploy to production
              deployment: production
              script:
                - apt-get update
                - apt-get -qq install git-ftp
                - git ftp push --user $FTP_username --passwd $FTP_password ftp://ftp.clientsite.com
        main-dev:
          - step:
              name: Deploy to staging
              deployment: staging
              script:
                - apt-get update
                - apt-get -qq install git-ftp
                - git ftp push --user $FTP_username --passwd $FTP_password ftp://ftp.clientsite.com/staging.clientsite.com

Add a branch called main-dev.

On Your Local Machine

Clone the repository into an empty directory you’d like to use for the local installation. Check out the main-dev branch.

Set up a local WP install in this directory. There are many tools for this—Local by Flywheel, MAMP, Docker, etc. Make sure everything is the same (WordPress version, PHP version, Apache/Nginx, etc.) as what is running on the client’s server.

Add a .gitignore that looks something like this. Essentially, we want Git to ignore everything except wp-content, so that core files and per-site configuration never conflict between installations. You may also want to add your own rules for ignoring large backup files and system-created Icon and .DS_Store files.

    # Ignore everything
    *

    # But not .gitignore
    !*.gitignore

    # And not the readme
    !README.md

    # But descend into directories
    !*/

    # Recursively allow files under subtree
    !/wp-content/**

    # Ignore backup files
    # YOUR BACKUP FILE RULES HERE

    # Ignore system-created Icon and DS_Store files
    Icon?
    .DS_Store

    # Ignore recommended WordPress files
    *.log
    .htaccess
    sitemap.xml
    sitemap.xml.gz
    wp-config.php
    wp-content/advanced-cache.php
    wp-content/backup-db/
    wp-content/backups/
    wp-content/blogs.dir/
    wp-content/cache/
    wp-content/upgrade/
    wp-content/uploads/
    wp-content/wflogs/
    wp-content/wp-cache-config.php

    # If you're using something like underscores or another builder:
    # Ignore node_modules
    node_modules/

    # Don't ignore package.json and package-lock.json
    !package.json
    !package-lock.json

Save and commit .gitignore.
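Because later rules override earlier ones, it can be worth checking what the file actually ignores before relying on it. The following is a rough sketch using git check-ignore in a throwaway repository with a trimmed-down copy of the rules; the scratch directory, the sample file names, and the is_ignored helper are all illustrative assumptions:

```shell
#!/bin/sh
# Sketch: confirm which paths a shortened copy of the rules above ignores.
# Runs in a scratch repository; file names are examples only.
set -e
repo="$(mktemp -d)"
cd "$repo"
git init -q .

cat > .gitignore <<'EOF'
*
!*.gitignore
!README.md
!*/
!/wp-content/**
wp-config.php
wp-content/uploads/
EOF

mkdir -p wp-content/themes/my-theme wp-content/uploads/2019 wp-admin
touch wp-config.php wp-admin/index.php README.md
touch wp-content/themes/my-theme/style.css wp-content/uploads/2019/photo.jpg

# git check-ignore exits 0 when the path is ignored and 1 when it is not.
is_ignored() {
  if git check-ignore -q "$1"; then echo "ignored"; else echo "kept"; fi
}

for path in wp-config.php wp-admin/index.php README.md \
    wp-content/themes/my-theme/style.css wp-content/uploads/2019/photo.jpg; do
  echo "$path: $(is_ignored "$path")"
done
```

In a real project, the same git check-ignore call (optionally with -v to see which rule matched) can be run from the repository root against any path you are unsure about.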

Make changes and commit accordingly.

Any time new commits reach main-dev on Bitbucket, Pipelines will fire an FTP upload to the staging site. Any time new commits reach master, it will fire an FTP upload to the production site. Note that each upload uses build minutes, so you might want to do most local changes on a branch off of main-dev, then merge to main-dev once you’re done for the day.

Merging main-dev into master will bring all staging changes live. You can check the status of Pipelines and Deployments on the repo on Bitbucket.org.
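The branch flow described above can be sketched in a throwaway local repository. There is no remote here, so nothing is deployed; the branch name menu-restyle, the commit messages, and the file contents are made-up examples:

```shell
#!/bin/sh
# Sketch of the branch flow in a scratch repository (no remote, no deploys).
set -e
repo="$(mktemp -d)"
cd "$repo"
git init -q .
git symbolic-ref HEAD refs/heads/master   # make sure the first branch is master
git config user.name "Example Dev"
git config user.email "dev@example.com"

echo "# Client site" > README.md
git add README.md
git commit -q -m "Initial commit"

git checkout -q -b main-dev               # staging branch
git checkout -q -b menu-restyle           # day-to-day work happens here

mkdir -p wp-content/themes/my-theme
echo "/* menu styles */" > wp-content/themes/my-theme/style.css
git add wp-content
git commit -q -m "Restyle the main menu"

# Fold the day's work into main-dev in one go. In the real setup, pushing
# main-dev after this merge is what would trigger the staging deploy.
git checkout -q main-dev
git merge -q --no-ff -m "Merge menu-restyle into main-dev" menu-restyle

# Once staging looks right, promote the same commits to production.
git checkout -q master
git merge -q --no-ff -m "Merge main-dev into master" main-dev

git log --oneline --first-parent master
```

Working on a side branch means however many commits you make locally, staging only gets one upload per merge, which is where the build-minute savings come from.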

Syncing WordPress Databases Across Installations

Note that the above will only sync files (themes, plugins, etc). Syncing the database between production and staging becomes a different matter, as often clients are making changes on the live site that are not reflected on the staging site, and vice versa.

For syncing databases across WordPress installations, several options exist. Traditionally, you can update databases by exporting and importing via phpMyAdmin. This is tricky, though, because a plain import does not update things that need to change between sites, like permalinks in post content. With this method, a favorite tool is the Velvet Blues Update URLs plugin, which lets you search for any instance of the old site URL (e.g., https://staging.clientsite.com) and replace it with the new site URL (e.g., https://clientsite.com). It also works with relative paths and strings. This method leaves a lot of room for human error: if a replacement string is mistyped, it can break the entire site and lock you out of both the plugin and the dashboard.
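One low-tech way to reduce that risk is to confirm that the string you are about to search for really occurs in the exported data. This is only a sketch: count_occurrences is a hypothetical helper, and the sample dump below is invented to stand in for a real phpMyAdmin export.

```shell
#!/bin/sh
# Hypothetical pre-check: count how often a URL appears in an SQL export.
count_occurrences() {
  # $1 = file to scan, $2 = literal string to look for.
  # -F treats the string literally, -o prints each match on its own line;
  # "|| true" keeps a zero-match result from being treated as a failure.
  { grep -o -F -- "$2" "$1" || true; } | wc -l | tr -d ' '
}

# Made-up sample standing in for an exported database.
dump="$(mktemp)"
cat > "$dump" <<'EOF'
INSERT INTO wp_options VALUES (1,'siteurl','https://staging.clientsite.com');
INSERT INTO wp_options VALUES (2,'home','https://staging.clientsite.com');
INSERT INTO wp_posts VALUES (7,'<a href="https://staging.clientsite.com/about">About</a>');
EOF

echo "correct string: $(count_occurrences "$dump" 'https://staging.clientsite.com')"
echo "mistyped string: $(count_occurrences "$dump" 'https://stagin.clientsite.com')"
```

A count of zero for the search string is a strong hint that it was mistyped. This only checks the input; it does not perform or validate the replacement itself.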

While a plugin like All-in-One WP Migration offers a search/replace feature out of the box and is incredibly user-friendly, it also brings over files, which undoes the benefits of our whole Pipelines workflow. Plus, since it reimports everything in wp-content/uploads, it can produce huge files and long loading times, making it unfit for moving changes across installations. A plugin like this is best reserved for backups of the entire site for archival/security purposes.

VersionPress seems like an interesting solution, but it is not yet proven in many production environments. For now, plugins like WP Sync DB or WP Migrate DB Pro seem to be the best solutions for database management. They allow for pulling/pushing databases across installations while giving the option to automatically update URLs and paths. They can migrate only certain tables, like wp_posts for post content only, without wasting time on reimporting users and sitewide settings. I like to always pull from live to make sure no production data gets overwritten. Here’s an example setup if you are using WP Sync DB (more walkthroughs are available on the WP Sync DB GitHub):

- On the live site: Set up WP Sync DB with “Allow Pull from this repository” setting enabled.

- On the staging site: Set up WP Sync DB with Pull from Live settings. Name it “live-to-staging.”

- On your local dev setup: Set up WP Sync DB with Pull from Live settings. Name it “live-to-dev.”

You may also want to set up a pushing “dev-to-staging” rule, and check the staging site setting to allow the database to be overwritten.

Wrapping Up

I’ve found these methods tend to work for most use cases, both in developing a new WordPress website and for redesigning/refiguring an already-live site.

This workflow allows for code deployments that keep all site versions up to date with no added dev time or effort, along with intentional, safe database migration logic for working between sites. Plugin updates go through source control as well, so they can be safely checked on staging before being committed to the live site, which minimizes production site breaks.

While there is certainly room for improvement to bring more of a source control workflow to database management, until a tool like VersionPress is used more in production environments, this method of selective pulls/pushes of the database via WP Sync DB or WP Migrate DB Pro seems to be the most secure way of dealing with this. Curious to hear what works for your WordPress workflow, or if after all of this you’d rather just grab your lasso and cowboy code it!
